Research and Publications

Our collaboration with esteemed industry partners and the community has led to numerous research projects spanning various application domains. The IAPI Research Lab welcomes students, researchers, and companies to explore collaboration and research opportunities with us. To discuss potential projects, please reach out to us.

Explainable AI in Urban Scene Segmentation

Explainable Artificial Intelligence (XAI) in scene segmentation plays a crucial role in making deep learning models more transparent and trustworthy, especially in safety-critical applications such as autonomous driving. Scene segmentation involves labeling each pixel in an image, and XAI helps users understand why a model assigns certain labels to specific regions. By visualizing attention maps, feature importance, or decision pathways, XAI techniques uncover the reasoning behind model predictions, enabling better debugging, model validation, and ethical deployment. This transparency is essential for building confidence in AI systems and ensuring accountability in real-world decision-making. Graduate students Tanmay Sunil Hatkar and Abhinav Pandey of the IAPI Research Lab have developed and experimented with an urban scene segmentation model and generated interpretations of its predictions using Grad-CAM. This work has led to two notable research contributions from IAPI-RL:

- Tanmay Sunil Hatkar and Saad B. Ahmed, “Urban Scene Segmentation and Cross-Dataset Transfer Learning using SegFormer”, presented at ICMVA 2025, Australia.

- Tanmay Sunil Hatkar, Abhinav Pandey and Saad B. Ahmed, “Explainable AI for Scalable and Cross-Domain Scene Segmentation”, under review at IEEE Transactions on Artificial Intelligence.

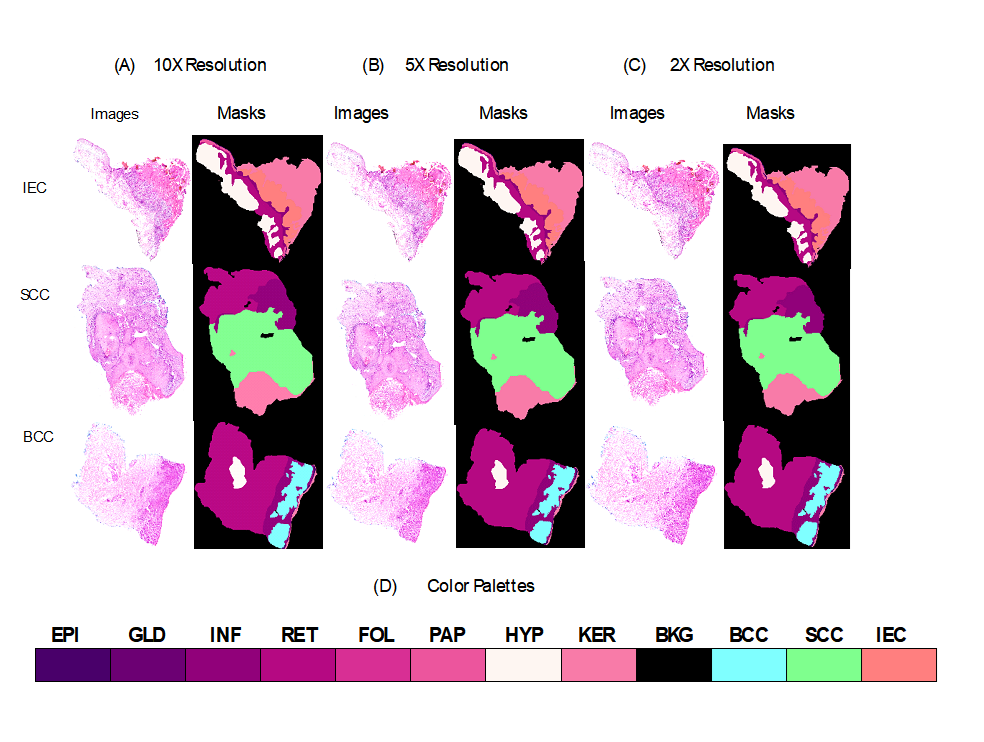
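For readers unfamiliar with Grad-CAM, its core computation can be sketched in a few lines. Grad-CAM weights each feature map of a chosen convolutional layer by the global average of the gradients of a target score with respect to that map, sums the weighted maps, and applies a ReLU. The plain-Python sketch below shows only this final combination step and assumes the activations and gradients have already been extracted from a trained network; for segmentation, one common adaptation uses the sum of a class's logits over the pixels of interest as the target score. The function name `grad_cam` and the nested-list input format are illustrative assumptions, not the lab's implementation.

```python
from typing import List

Map = List[List[float]]  # one H x W feature map stored as nested lists


def grad_cam(activations: List[Map], gradients: List[Map]) -> Map:
    """Combine K feature maps A^k with gradients dy/dA^k into a Grad-CAM heatmap.

    activations[k][i][j] is A^k at position (i, j); gradients has the same shape
    and holds the derivative of the target score y with respect to that value.
    """
    h, w = len(activations[0]), len(activations[0][0])
    # alpha_k: global average pooling of the gradients over all H*W positions
    alphas = [sum(sum(row) for row in g) / (h * w) for g in gradients]
    cam = [[0.0] * w for _ in range(h)]
    for a, alpha in zip(activations, alphas):
        for i in range(h):
            for j in range(w):
                cam[i][j] += alpha * a[i][j]
    # ReLU: keep only locations whose features increase the target score
    return [[max(0.0, v) for v in row] for row in cam]


acts = [[[1, 2], [3, 4]], [[0, 2], [1, 0]]]
grads = [[[1, 1], [1, 1]], [[-2, -2], [-2, -2]]]
print(grad_cam(acts, grads))  # [[1.0, 0.0], [1.0, 4.0]]
```

In practice the resulting map is upsampled to the input resolution and overlaid on the image as a heatmap.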

Cancer Detection and Segmentation

My research on skin cancer detection and segmentation focuses on developing deep learning models that accurately identify and delineate cancerous regions in dermoscopic and histopathological images. By leveraging transformer-based and interpretable semantic segmentation architectures, this work aims to improve early diagnosis through precise localization of malignant lesions while maintaining model transparency. It integrates explainable AI techniques to visualize model decision-making, ensuring that predictions are clinically interpretable and reliable. This research contributes to advancing automated diagnostic tools that support dermatologists in making informed and timely decisions. Sana Fatima, a MITACS Globalink Research Intern (GRI) from the National University of Sciences and Technology (NUST), Pakistan, joined us in Summer 2024 and worked on skin histology images. Jair Fernando Vasquez Ramos, an undergraduate student from Universidad Nacional de Ingeniería, Peru, joined IAPI-RL in January 2025 through the Emerging Leaders in the Americas Program (ELAP), where he worked on liver tumor segmentation. This work has resulted in the following contributions:

- Sana Fatima, Muhammad Usman Akram, Sabah Mohammad, and Saad Bin Ahmed, “Deep Learning in Dermatopathology: Applications for Skin Disease Diagnosis and Classification”, accepted and in press in Discover Applied Sciences.

- Sana Fatima, Anum Abdul Salam, M. Usman Akram, Adeel M Syed, Sabah Mohammad, and Saad Bin Ahmed, “Incremental Learning Approach for Semantic Segmentation of Skin Histology Images”, under review at Scientific Reports.

- Jair Fernando Vasquez Ramos, M. Mazhar Rathore, Saad B. Ahmed, “Attention-Driven Semantic Segmentation for Liver Tumor Detection”, presented at the Health Comm Conference.

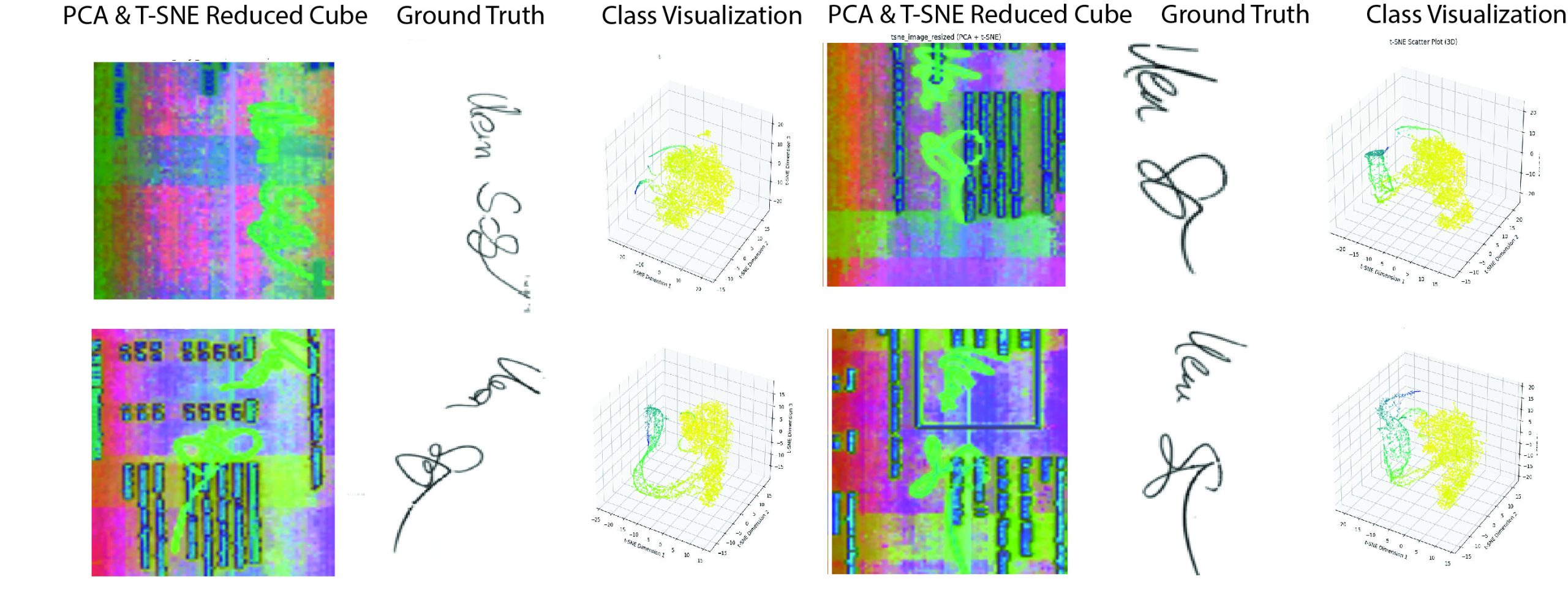

Hyperspectral Image Analysis

Hyperspectral image analysis is a powerful research area that enables the extraction of detailed spectral and spatial information from complex scenes, far beyond what is possible with conventional imaging. Because hyperspectral sensors capture hundreds of contiguous spectral bands, the technique is invaluable for applications in remote sensing, environmental monitoring, agriculture, medical diagnostics, and material identification. My research focuses on developing advanced machine learning and deep learning techniques to efficiently analyze high-dimensional hyperspectral data, addressing challenges such as spectral redundancy, noise, and limited labeled data. By leveraging spectral-spatial models, feature augmentation, and explainable AI, this work aims to enhance the interpretability, accuracy, and robustness of hyperspectral analysis, contributing to real-world decision-making across diverse domains. Two graduate students from the National University of Sciences and Technology (NUST), Pakistan, Hasan Irtaza Mirza and Zainab Zaman, worked on the analysis of hyperspectral document images with overlapping text regions. Graduate student Mohammad Salman Khan is developing a deep learning approach for hyperspectral data analysis. Hyperspectral imaging is an evolving research area with established applications across many domains. We have investigated hyperspectral document images and conducted a number of experiments on them; our contributions are as follows:

- Zainab Zaman, Saad Bin Ahmed, Muhammad Imran Malik, “Analysis of Hyperspectral Data to Develop an Approach for Document Images”, Sensors, MDPI, 2023.

- Hasan Irtaza Mirza, Saad Bin Ahmed, Roberto Solis Oba, and Muhammad Imran Malik, “Endmember Analysis of Overlapping Handwritten Text in Hyperspectral Document Images”, IEEE Access, 2024.

- Zainab Zaman, Muhammad Imran Malik, Saad Bin Ahmed, “Signature Segmentation in Hyperspectral Document Images with UMAP Feature Embedding and Transformers”, submitted to ACPR 2025.

- Zainab Zaman, Muhammad Imran Malik, Saad Bin Ahmed, “Segmentation of Overlapping Pixels in Multi-spectral Document Images by Statistical Techniques”, under review at IJDAR.

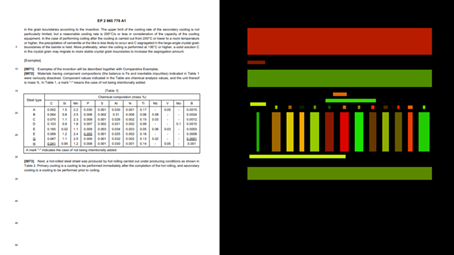
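To make the idea of overlapping text regions concrete: where two handwritten strokes overlap, a single hyperspectral pixel can be viewed as a mixture of the spectra of the two inks (the endmembers). Under the standard linear mixing model, pixel ≈ a1·e1 + a2·e2, and the abundances a1 and a2 can be estimated by least squares. The plain-Python sketch below solves the resulting 2×2 normal equations directly. It is an illustrative assumption for exposition only, not the method of the publications listed above; the function `unmix_two` and the toy 4-band spectra are hypothetical, and practical pipelines handle many more bands, noise, and constraints such as non-negative, sum-to-one abundances.

```python
from typing import Sequence, Tuple


def dot(u: Sequence[float], v: Sequence[float]) -> float:
    """Inner product of two spectra (band by band)."""
    return sum(a * b for a, b in zip(u, v))


def unmix_two(pixel: Sequence[float], e1: Sequence[float],
              e2: Sequence[float]) -> Tuple[float, float]:
    """Least-squares abundances (a1, a2) such that pixel ≈ a1*e1 + a2*e2.

    Solves the 2x2 normal equations with Cramer's rule; no non-negativity or
    sum-to-one constraint is imposed on the abundances.
    """
    g11, g12, g22 = dot(e1, e1), dot(e1, e2), dot(e2, e2)
    b1, b2 = dot(e1, pixel), dot(e2, pixel)
    det = g11 * g22 - g12 * g12
    if abs(det) < 1e-12:
        raise ValueError("endmember spectra are (nearly) collinear")
    a1 = (b1 * g22 - g12 * b2) / det
    a2 = (g11 * b2 - g12 * b1) / det
    return a1, a2


# Hypothetical 4-band spectra for two inks and a pixel where their strokes overlap
ink_a = [1.0, 0.0, 1.0, 0.0]
ink_b = [0.0, 1.0, 1.0, 1.0]
overlap = [0.3, 0.7, 1.0, 0.7]  # constructed as 0.3 * ink_a + 0.7 * ink_b
print(unmix_two(overlap, ink_a, ink_b))  # ≈ (0.3, 0.7)
```

The recovered abundances indicate how strongly each ink contributes to the overlapping pixel, which is the kind of information needed to separate the two strokes.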

Multimodal Document Image Analysis

Multimodal Document Image Analysis (MDIA) is a research area that focuses on understanding and extracting information from document images by integrating multiple sources of information, or “modalities.” These typically include the visual appearance of the document (layout, structure, fonts, etc.), the textual content (extracted via OCR or handwriting recognition), and often structural information such as table grids or field arrangements. Unlike traditional document processing, which may rely solely on text or image features, MDIA utilizes the complementary strengths of these modalities to improve accuracy, robustness, and contextual understanding.

Real-world documents such as forms, invoices, receipts, academic papers, and handwritten notes often contain a mixture of visual elements (such as layout or handwriting), semantic content (text and meaning), and logical structures (e.g., tabular data, section headings). Relying on only one modality (e.g., raw OCR text) can lead to errors, especially in noisy scans, complex layouts, or multi-language documents. MDIA improves performance by considering both what is written and how it is presented. For example, in a form, the position of the word “Total” next to a number is as important as the text itself. Several highly qualified personnel (HQP) are working on this project. Graduate student Muhammad Zahid Iqbal worked on table detection and content extraction. Olesia Omelchuk, an undergraduate student from Ukraine, joined our lab through the MITACS GRI program and is working on multimodal document image analysis. She is investigating open problems in this field and aims to develop a unified deep learning model that addresses the various challenges encountered in multimodal document image analysis.

- Muhammad Zahid Iqbal, Nitish Garg, and Saad Bin Ahmed, “Table Extraction with Table Data Using VGG-19 Deep Learning Model”, Sensors, 25(1): 203, 2025. https://doi.org/10.3390/s25010203
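The “Total” example in this section can be illustrated with a small layout-aware heuristic. Given OCR tokens with bounding boxes, it selects the numeric token that lies on the same line as the key word and closest to its right, combining what is written (a key word and a numeric string) with how it is presented (vertical alignment and horizontal proximity). The `Token` class, the `value_right_of` function, and the coordinates are hypothetical and for illustration only; this is a hand-written rule, not the unified deep learning model described above.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Token:
    """An OCR word with its bounding box (image coordinates, y grows downward)."""
    text: str
    x0: float
    y0: float
    x1: float
    y1: float


def is_number(text: str) -> bool:
    """True if the text parses as a number after stripping a leading '$' and commas."""
    try:
        float(text.replace(",", "").lstrip("$"))
        return True
    except ValueError:
        return False


def value_right_of(tokens: List[Token], key: str = "Total") -> Optional[str]:
    """Return the nearest numeric token on the same line and to the right of `key`."""
    anchors = [t for t in tokens if t.text.rstrip(":").lower() == key.lower()]
    if not anchors:
        return None
    k = anchors[0]  # use the first occurrence of the key word
    best = None  # (horizontal gap, token text)
    for t in tokens:
        if not is_number(t.text):
            continue
        center_y = (t.y0 + t.y1) / 2
        same_line = k.y0 <= center_y <= k.y1  # layout cue: vertical alignment
        to_the_right = t.x0 >= k.x1  # layout cue: reading order
        if same_line and to_the_right:
            gap = t.x0 - k.x1
            if best is None or gap < best[0]:
                best = (gap, t.text)
    return None if best is None else best[1]


tokens = [
    Token("Subtotal", 10, 100, 60, 112), Token("90.00", 200, 100, 240, 112),
    Token("Total:", 10, 120, 50, 132), Token("99.50", 200, 120, 240, 132),
]
print(value_right_of(tokens))  # 99.50
```

A learned multimodal model replaces such hand-written rules with features that encode the text and its position jointly, but the underlying cue is the same.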

Deep Learning in Medical Research

Deep learning has emerged as a transformative approach in medical research, offering unprecedented capabilities in analyzing complex biomedical data. Its applications span medical imaging, genomics, drug discovery, and electronic health records (EHR) analysis. In medical imaging, convolutional neural networks (CNNs) have achieved expert-level accuracy in tasks such as tumor detection, organ segmentation, and disease classification in modalities like MRI, CT, and histopathology images. Recurrent and transformer-based models have also enabled progress in sequential data analysis, such as patient history modeling and temporal disease progression prediction. Deep learning has facilitated faster, more accurate diagnosis, personalized treatment planning, and early disease detection. However, challenges remain in ensuring model interpretability, generalization across populations, and addressing biases in clinical datasets. Ongoing research focuses on explainable AI, multimodal learning, and integrating clinical domain knowledge to make deep learning models more reliable and applicable in real-world medical settings.

We have collaborated with the National University of Computer and Emerging Sciences, Pakistan, and Istinye University, Istanbul, Turkey.

- Sidra Safdar, Akhtar Jamil, Alaa Ali Hameed, and Saad B. Ahmed, “Self-Supervised Representation Learning for Anomaly Detection in Brain MRI”, 4th International Conference on Computing, IoT and Data Analytics, July 17–18, 2025.